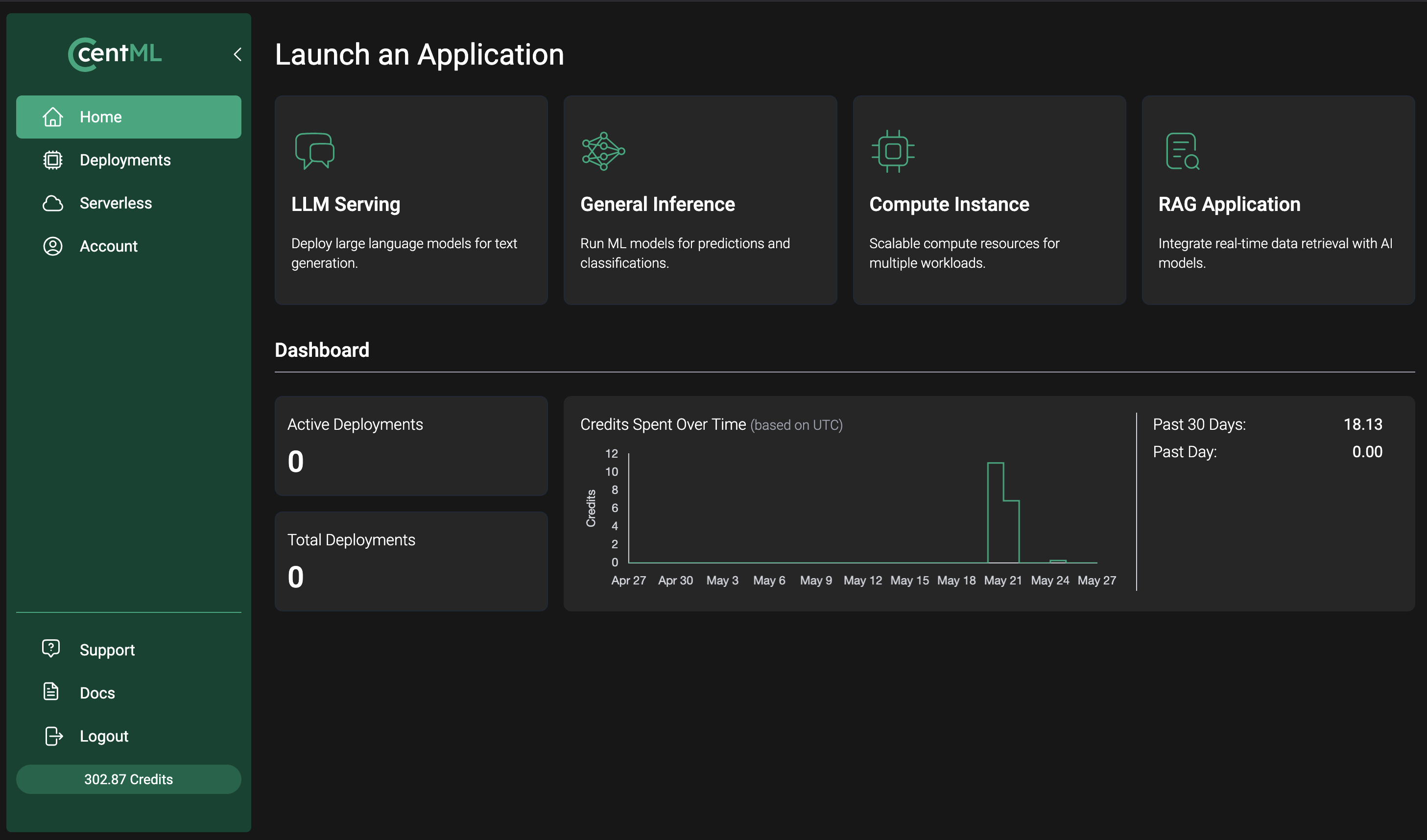

1. Log in to the NVIDIA CCluster

To get started, sign in to the NVIDIA CCluster console using the access details provided by your NVIDIA team.

2. Create a bearer token

To interact with NVIDIA CCluster endpoints programmatically, you need a Bearer Token. Follow the Managing Vault Objects documentation to generate one.

3. Deploy your first model

Choose the deployment type that fits your use case:

- LLM Serving: Deploy dedicated public or private LLM endpoints tailored to your performance requirements and budget.

- General Inference: Deploy custom containerized models on NVIDIA-managed infrastructure.

- Compute: Provision GPU compute for training, fine-tuning, or batch workloads.

Additional support: billing, sales, and technical

For access, billing, sales, or technical assistance, follow our Requesting Support guide.

What’s next

LLM Serving

Explore dedicated public and private endpoints for production model deployments.

Deploying Custom Models

Learn how to build your own containerized inference engines and deploy them on the NVIDIA CCluster.

Clients

Learn how to interact with the NVIDIA CCluster programmatically.
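As a rough illustration of programmatic access, the sketch below shows how the bearer token from step 2 is typically attached to an HTTP request, using only the Python standard library. The base URL, the `/v1/models` path, and the `CCLUSTER_TOKEN` environment variable are placeholders assumed for this example and are not taken from the NVIDIA CCluster documentation; substitute the values provided by your NVIDIA team.

```python
import os
import urllib.request

# Placeholder base URL (an assumption for illustration, not a real endpoint).
BASE_URL = "https://ccluster.example.com"


def build_request(path: str, token: str) -> urllib.request.Request:
    """Build a GET request that carries the bearer token in the Authorization header."""
    return urllib.request.Request(
        url=f"{BASE_URL}{path}",
        headers={
            "Authorization": f"Bearer {token}",  # standard HTTP bearer scheme
            "Accept": "application/json",
        },
        method="GET",
    )


if __name__ == "__main__":
    # Hypothetical variable name; keep the token out of source code.
    token = os.environ.get("CCLUSTER_TOKEN", "")
    request = build_request("/v1/models", token)  # hypothetical path
    print(request.get_method(), request.full_url)
    # To actually send it once the real URL is known:
    # with urllib.request.urlopen(request, timeout=10) as response:
    #     print(response.status)
```

Reading the token from an environment variable rather than hard-coding it keeps the credential out of version control. The request is only constructed here, not sent, so the sketch runs without network access.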