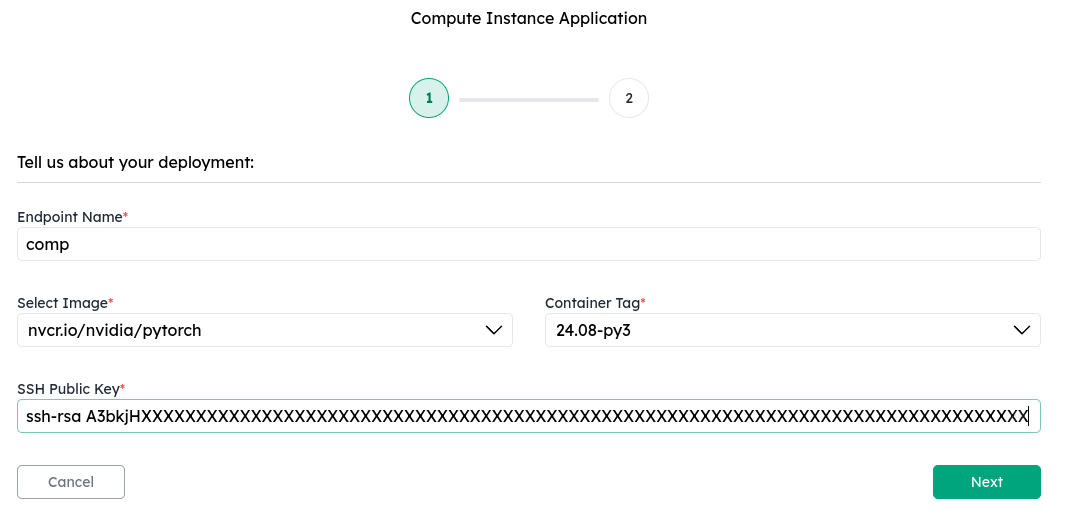

1. Select a base image

Spin up a compute instance by choosing one of the available base images:

- PyTorch: the latest NVIDIA NGC PyTorch image, preloaded with PyTorch and CUDA libraries.

- Ubuntu: a vanilla Ubuntu image for a minimal starting point. On full GPU instances, NVIDIA drivers are included via driver passthrough. On MIG instances, NVIDIA drivers are not yet available.
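Because driver and library availability differs between the base images, it can help to check what a running instance actually exposes. The following is a minimal sketch, not an official tool: the function name `describe_gpu_environment` is hypothetical, and it assumes only that `nvidia-smi` is on the `PATH` when NVIDIA drivers are present and that PyTorch, if installed, is importable as `torch`.

```python
import importlib.util
import shutil
import subprocess


def describe_gpu_environment() -> dict:
    """Report whether NVIDIA driver tools and PyTorch are visible on this machine.

    Hypothetical helper for illustration; not part of any platform SDK.
    """
    report = {"nvidia_smi": None, "torch_installed": False, "cuda_available": False}

    # nvidia-smi ships with the NVIDIA driver, so it is missing when no driver is exposed.
    smi_path = shutil.which("nvidia-smi")
    if smi_path is not None:
        try:
            # "-L" lists the GPUs (and MIG devices) the driver can see.
            result = subprocess.run(
                [smi_path, "-L"],
                capture_output=True,
                text=True,
                check=False,
                timeout=15,
            )
            if result.returncode == 0:
                report["nvidia_smi"] = result.stdout.strip()
        except (OSError, subprocess.TimeoutExpired):
            pass  # Treat a failing or hanging nvidia-smi as "no usable driver".

    # Only import torch if it is installed, so the script also runs on a plain Ubuntu image.
    if importlib.util.find_spec("torch") is not None:
        try:
            import torch

            report["torch_installed"] = True
            report["cuda_available"] = bool(torch.cuda.is_available())
        except ImportError:
            pass  # A broken install is reported the same way as a missing one.

    return report


if __name__ == "__main__":
    for key, value in describe_gpu_environment().items():
        print(f"{key}: {value}")
```

On the PyTorch image you would expect all three entries to be populated. On an Ubuntu image, `torch_installed` stays `False` until you install PyTorch yourself.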

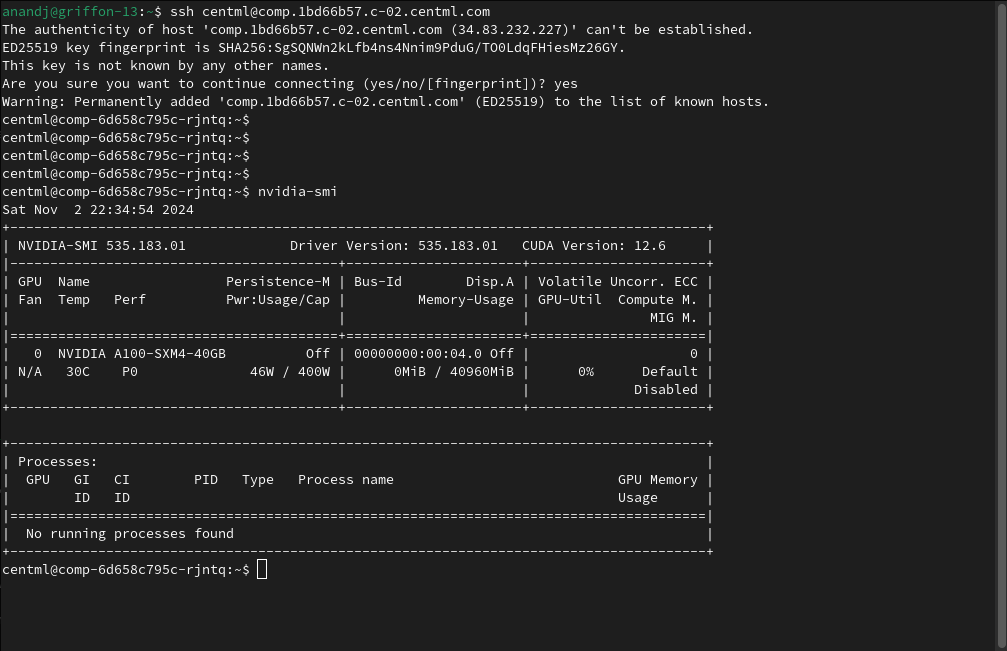

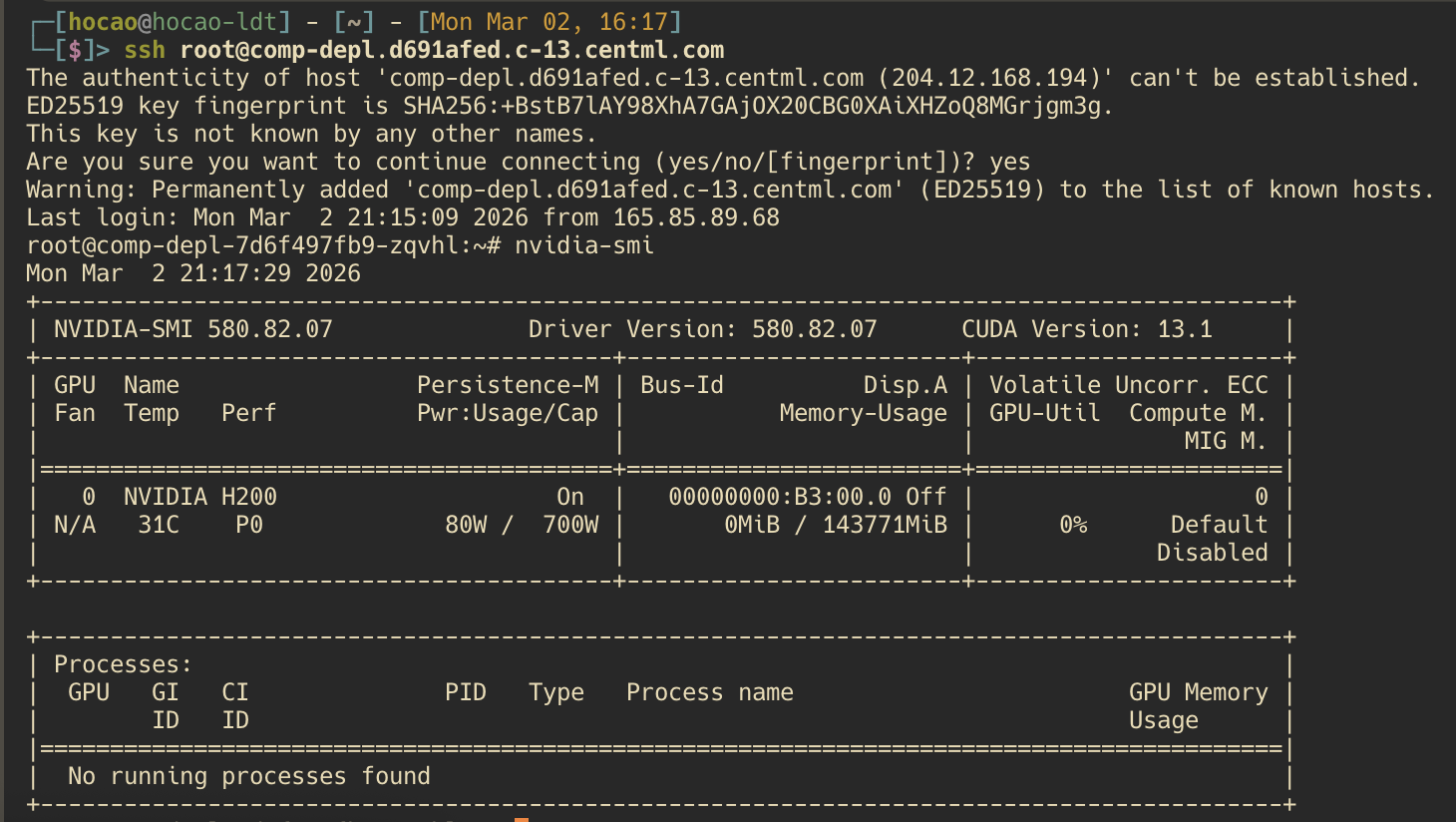

2. SSH into the instance

Once the instance is ready, navigate to the deployment details page. The Endpoint Configuration section displays:

- Endpoint URL: the hostname for your instance. Next to it are the copy button (copies the URL) and the SSH button (copies `ssh root@<endpoint_url>` so you can paste it directly into your terminal).

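Connect as the root user by running `ssh root@<endpoint_url>` in your terminal, replacing `<endpoint_url>` with the Endpoint URL of your instance.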
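If you want to script the connection rather than paste the command by hand, the sketch below builds the same `ssh root@<endpoint_url>` invocation in Python. The helper names `build_ssh_command` and `run_remote` and the hostname `my-instance.example.com` are hypothetical placeholders. It assumes an OpenSSH client is installed locally and that your key is already authorized on the instance.

```python
from __future__ import annotations

import shlex
import subprocess


def build_ssh_command(endpoint_url: str, user: str = "root") -> list[str]:
    """Return the argument list for `ssh <user>@<endpoint_url>`.

    Hypothetical helper for illustration; not part of any platform SDK.
    """
    host = endpoint_url.strip()
    # Reject empty values, embedded whitespace, and anything ssh could read as an option.
    if not host or host.startswith("-") or any(ch.isspace() for ch in host):
        raise ValueError("endpoint_url must be a plain hostname")
    return ["ssh", f"{user}@{host}"]


def run_remote(endpoint_url: str, remote_command: str) -> subprocess.CompletedProcess:
    """Run a single command on the instance over SSH and capture its output."""
    argv = build_ssh_command(endpoint_url) + [remote_command]
    return subprocess.run(argv, capture_output=True, text=True, check=False)


if __name__ == "__main__":
    # Placeholder hostname: substitute the Endpoint URL from the deployment details page.
    command = build_ssh_command("my-instance.example.com")
    print(shlex.join(command))
```

Passing the arguments as a list avoids shell quoting issues. For example, `run_remote(endpoint, "nvidia-smi")` would run that one command remotely and return its output without opening an interactive session.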

What’s next

Clients

Learn how to interact with the NVIDIA CCluster programmatically

Private Inference Endpoints

Learn how to create private inference endpoints

LLM Serving

Explore dedicated public and private endpoints for production model deployments.